- Home

- Product

- Advisory

Gatekeeper: Automate LLM, ML, and agentic testing. Run pre-release test suites as a CI/CD release gate.

- Documentation

Guardian: Monitor model performance, detect bias, and protect against adversarial attacks in real time.

- Case Studies

- Open Source

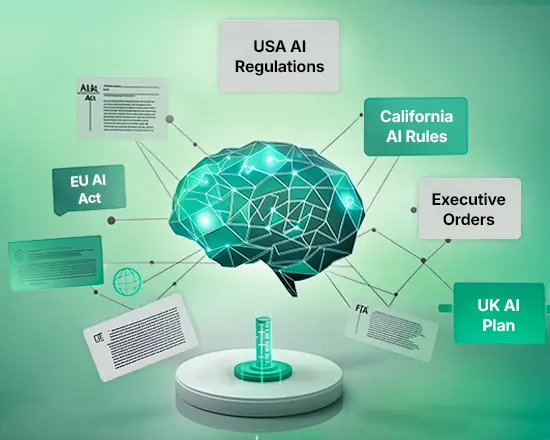

AI Policy Suite: Access and use our curated collection of comprehensive, ready-to-deploy AI governance and safety policies.

MedHELM: A comprehensive Stanford CRFM benchmarking project, built to evaluate LLMs on real-world clinical tasks.

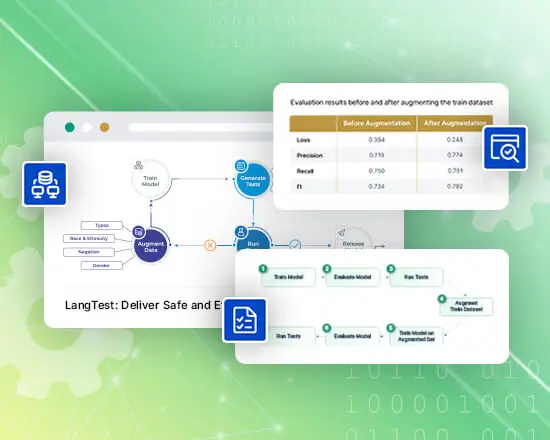

LangTest: A comprehensive, unified testing library for measuring language model accuracy, bias, and robustness in LLM applications.

- Resources

- Contact Us