Executive Summary

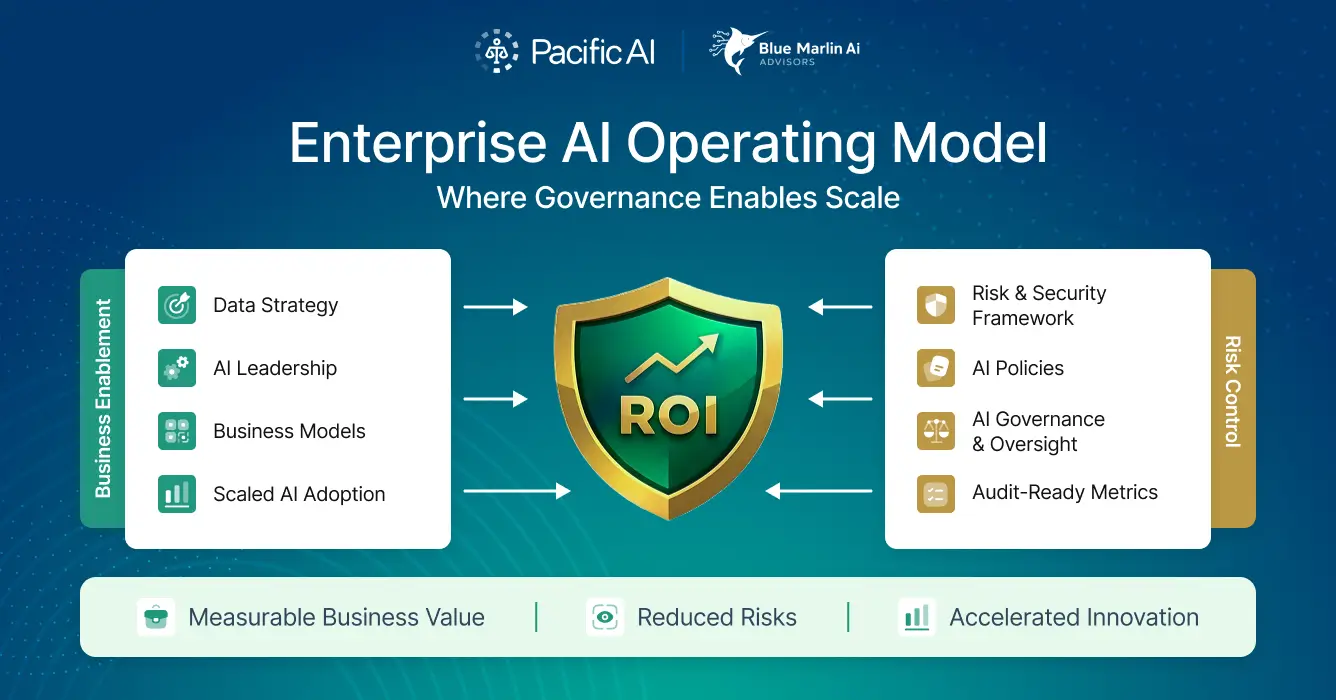

Enterprises are racing to deploy AI, but most organizations remain trapped between two extremes: experimentation without control, or compliance programs that slow innovation to a crawl. Regulatory pressure is rising globally, while executives simultaneously expect AI to deliver measurable productivity gains, new revenue, and competitive differentiation.

By combining Blue Marlin AI Advisors’ strategic consulting and enterprise adoption expertise with the Pacific AI Governance Platform (including its Policy Suite, Governor, and Guardian), organizations can move beyond checkbox compliance toward responsible, scalable, and value-generating AI.

Together, Blue Marlin and Pacific AI offer a “better together” approach that leads to:

- Faster speed to value

- Embedded governance that does not stall innovation

- Practical change management and program execution

- AI systems that are compliant out of the box and enterprises that are ready to use them

Leadership teams (CEO, CIO, CDO, GC, CRO) responsible for scaling AI are at an inflection point: constantly changing AI regulations introduce uncertainty, legal and risk functions slow deployment because governance is unclear, and many AI initiatives fail to gain adoption. Add heightened scrutiny from boards and investors, and balancing AI governance and gains becomes nearly impossible.

For enterprises that prioritize responsible, efficient, and transformational AI, this partnership bridges the gaps that prevent organizations from getting the most value out of their AI initiatives. It’s where ambition, regulatory expectations, and organizational readiness live in harmony — an imperative for organizations deploying or expanding AI in regulated or semi-regulated environments, particularly those transitioning from isolated pilots to production-level systems.

The Challenge: Compliance Alone is Not Enough

Most AI governance initiatives today are reactive. They focus on avoiding fines, passing audits, or satisfying regulators. While necessary, this approach creates three common pitfalls:

01 Governance without adoption: Policies exist, but teams bypass them to get work done.

02 Controls without context: AI systems are compliant, yet disconnected from business priorities.

03 Risk mitigation without ROI: AI becomes a cost center instead of a growth engine.

At the same time, many AI programs fail because they underestimate the complexity of governance, legal exposure, and system-level risk. Enterprises don’t need another tool or another framework. They need an integrated operating model that unites governance, culture, workflows, and value creation.

A Unified Approach: Governance + Transformation

The partnership between Blue Marlin AI Advisors and Pacific AI addresses this need directly.

- Pacific AI Governance Platform ensures AI systems are compliant, safe, transparent, and auditable across their lifecycle. The platform operates as a live system, continuously testing, monitoring, and updating AI behavior in production as regulations, risks, and models evolve, something especially critical for generative and agentic AI systems.

- Blue Marlin AI Advisors enables enterprises to adopt, operationalize, scale, and measure AI through leadership alignment, workflow redesign, organizational change, and ROI measurement.

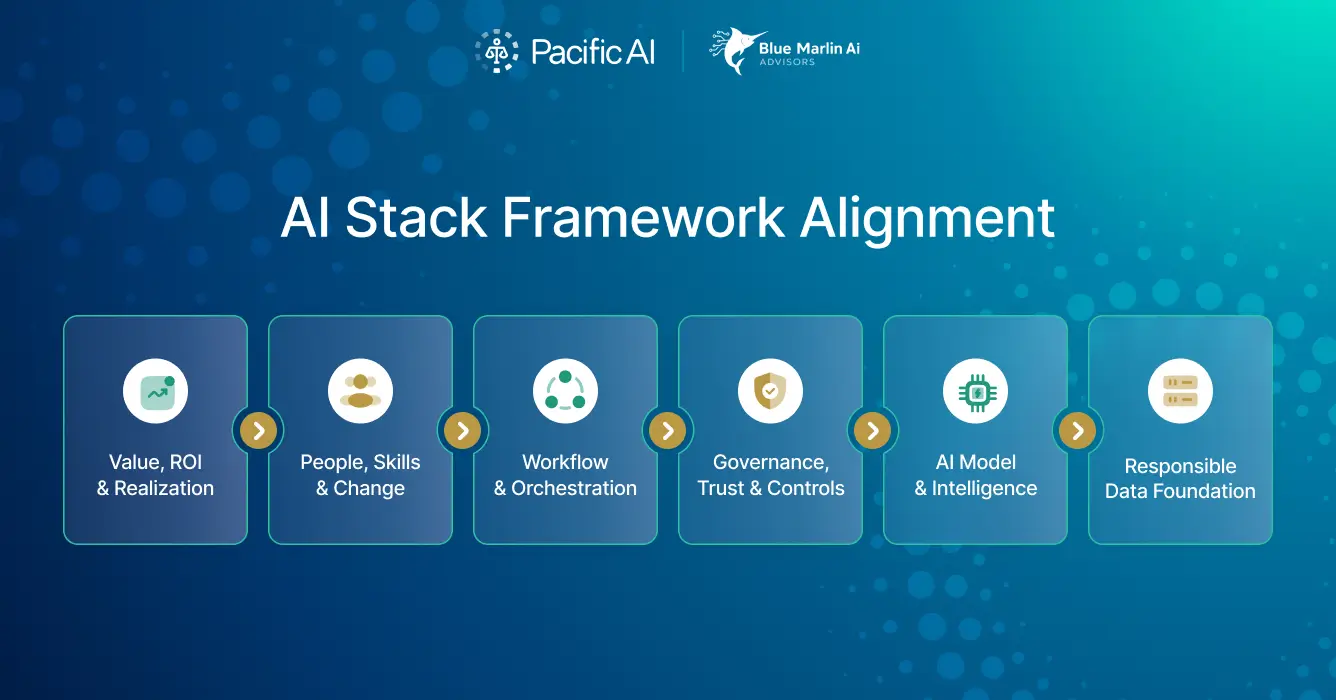

The AI Adoption Stack Framework

Rather than treating governance and adoption as parallel workstreams, Blue Marlin AI Advisors and the Pacific AI Governance Platform work together across six enterprise layers. Blue Marlin calls this model “The AI Adoption Stack Framework™”; it ensures AI is compliant by design, usable in practice, and valuable in outcome.

Built from hundreds of enterprise engagements spanning energy, retail, services, and regulated industries, this framework clarifies the six foundational layers every organization must strengthen to successfully adopt AI at scale. When these layers function in harmony, AI becomes a disciplined operating capability that delivers trusted business value.

Consider four recent case studies of enterprise AI implementations. Two represent successful implementations aligned with the principles set forth by Pacific AI and Blue Marlin AI Advisors. The other two reflect the serious consequences of rushing to implement AI without a solid foundation of responsible, governed data, without the right change management approach, and without an understanding of the implementation gaps that can lead to embarrassing outcomes, damaging both customers’ view of the brand and employees’ trust in AI.

Real-World Context: Four Lessons from Recent Enterprise AI Deployments

The headlines of the past year tell a clear story. Enterprise AI success is not determined by model sophistication alone; rather, it is determined by how well governance, workflow redesign, leadership alignment, and risk controls are integrated into the operating model.

Two recent enterprise examples demonstrate what responsible, value-generating AI looks like when governance and transformation move in parallel. Two others highlight the consequences of accelerating deployment without sufficient controls or cultural readiness. Together, these cases reinforce why governance and enterprise adoption must be unified, not sequential, underscoring the need for a comprehensive approach.

Success Stories

Case Study 1: HSBC – Responsible AI Rollout in a Regulated Environment

In late 2025, HSBC announced a multi-year partnership with French start-up Mistral to deploy generative AI capabilities across internal workflows, including analysis, translation, and document-heavy processes¹. As a global financial institution operating in heavily regulated markets, HSBC could not treat AI as a pilot experiment. Governance and compliance were foundational requirements.

Rather than deploying generative AI tools in an ad hoc manner, HSBC emphasized:

- Self-hosted model deployment to maintain data control¹

- Responsible AI governance commitments embedded into rollout¹

- Structured integration into defined workflows

- Clear executive sponsorship and risk alignment

This approach reflects alignment across multiple layers of the AI Adoption Stack™:

- Layer 1 (Data): Controlled hosting and data oversight

- Layer 2 (Model): Model selection aligned to internal decision workflows

- Layer 3 (Governance): Explicit responsible-AI commitments

- Layer 4 (Workflow): Integration into defined operational tasks

- Layer 6 (ROI): Productivity and cycle-time reduction as measurable outcomes

The organization did not treat compliance as a barrier to innovation; it treated governance as the prerequisite for scaling safely. As industry leaders emphasized at Davos, productivity gains from AI require structured investment in people and governance, not tool deployment alone⁶.

Case Study 2: Citigroup – AI Workforce Enablement at Scale

Citi built a distributed network of thousands of internal AI champions to drive adoption, training, and responsible usage across business units². Broader reporting on Wall Street banks highlights similar scaled internal AI initiatives focused on productivity gains³.

What Citigroup did right:

- Established peer-led AI accelerators²

- Integrated AI into daily workflows instead of isolating experimentation

- Invested in workforce literacy and structured usage patterns

- Framed AI productivity in measurable terms, particularly in technical and development teams³

Their approach maps directly to:

- Layer 5 (People, Skills, Change): Distributed enablement and cultural adoption

- Layer 4 (Workflow): Embedding AI into real business processes

- Layer 6 (ROI): Tracking measurable time savings and productivity gains

- Layer 3 (Governance): Creating local accountability rather than relying solely on policy

Technology didn’t drive adoption; leadership, incentives, and peer credibility did. Independent research suggests that many enterprises struggle with trust gaps in agentic AI initiatives when governance and workforce alignment are weak⁷. Citi’s distributed enablement model directly addresses that risk.

Failures

Case Study 3: Microsoft Copilot “Reprompt” Exploit – When AI Becomes the Attack Surface

In early 2026, a reported vulnerability allowed attackers to exploit Microsoft Copilot through a crafted prompt interaction that could trigger unintended data exposure⁹. Broader analysis warned that conventional cybersecurity frameworks alone are insufficient for AI-specific threat surfaces⁵.

The failure was not traditional cybersecurity negligence; it was a governance gap at the semantic layer.

Here’s what went wrong:

- An AI assistant inherited broad enterprise data permissions

- Insufficient guardrails around prompt-based manipulation

- Monitoring and behavioral testing lagged deployment velocity

Security analysts have warned that no-code AI agents and assistants can unintentionally expose enterprise data when governance and entitlement structures are not redesigned for AI access patterns⁴.

The failure to update governance specifically for AI access exposed weaknesses across:

- Layer 1 (Data): Over-permissioned data access

- Layer 2 (Model): Insufficient adversarial robustness testing

- Layer 3 (Governance): Inadequate continuous monitoring of generative behavior

- Layer 4 (Workflow): AI embedded into workflows without clear override or containment mechanisms
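
The over-permissioning gap listed under Layer 1 can be illustrated with a minimal sketch. It assumes a simple model in which an assistant may read a data category only if both the requesting user and the assistant's own approved scope allow it. The names `AssistantScope`, `effective_access`, `can_read`, and the category labels are hypothetical and do not describe Microsoft Copilot or the Pacific AI Governance Platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssistantScope:
    """Hypothetical: data categories an AI assistant is approved to read."""
    allowed_categories: frozenset


def effective_access(user_entitlements: set, scope: AssistantScope) -> set:
    """Return the categories the assistant may read on behalf of a user.

    The assistant never gets more than the user has, and never more than
    its own approved scope: the result is the intersection of the two.
    """
    return set(user_entitlements) & set(scope.allowed_categories)


def can_read(category: str, user_entitlements: set, scope: AssistantScope) -> bool:
    """Check a single data category against the effective access set."""
    return category in effective_access(user_entitlements, scope)


# Illustrative values only (assumptions, not taken from any real deployment).
scope = AssistantScope(frozenset({"public_docs", "team_wiki"}))
user = {"public_docs", "team_wiki", "hr_records", "finance_ledgers"}

print(sorted(effective_access(user, scope)))
print(can_read("hr_records", user, scope))
```

In this sketch the user can open HR records directly, but the assistant cannot read them on the user's behalf, because that category was never added to the assistant's scope.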

Even when patched quickly, incidents like this erode workforce trust and force reactive policy tightening. AI becomes perceived as risky rather than empowering.

Case Study 4: Enterprise Chatbot Exposure – Prompt Injection and Brand Risk

Recent enterprise chatbot incidents have demonstrated how prompt manipulation can result in exposure of sensitive information or reputational damage⁸. These cases often involve insufficient output filtering, weak acceptable-use constraints, and limited adversarial testing.

These incidents typically resulted from:

- A lack of strict access controls and tool-call restrictions

- Weak output filtering and red teaming

- Insufficient human-in-the-loop escalation mechanisms

- Deployment ahead of structured risk-tier classification
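
Three of the controls above (tool-call restrictions, risk-tier classification, and human-in-the-loop escalation) can be combined in one small gate. The following is a minimal sketch under stated assumptions: the tool names, the two-tier `"low"`/`"high"` scheme, and the `gate_tool_call` function are hypothetical illustrations, not the API of any product named in this paper.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"        # run the tool call automatically
    ESCALATE = "escalate"  # pause and route to a human reviewer
    DENY = "deny"          # refuse: tool is not on the allow-list


# Hypothetical policy table: tool name -> risk tier.
TOOL_RISK_TIERS = {
    "search_faq": "low",
    "lookup_order_status": "low",
    "issue_refund": "high",
}


def gate_tool_call(tool_name: str) -> Decision:
    """Decide what happens when a chatbot requests a tool call.

    Unknown tools are denied by default, high-risk tools require a human,
    and only low-risk tools on the allow-list run without review.
    """
    tier = TOOL_RISK_TIERS.get(tool_name)
    if tier is None:
        return Decision.DENY
    if tier == "high":
        return Decision.ESCALATE
    return Decision.ALLOW


for name in ("search_faq", "issue_refund", "export_customer_table"):
    print(name, "->", gate_tool_call(name).value)
```

The deny-by-default branch is the key design choice: a tool that was never classified into a risk tier cannot be invoked, which keeps deployment from running ahead of classification.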

These patterns reinforce findings that agentic AI initiatives frequently stall or backfire when trust mechanisms and governance controls are immature⁷.

The gaps reveal breakdowns across:

- Layer 2 (Model): Failure to stress-test adversarial behavior

- Layer 3 (Governance): Inadequate acceptable-use constraints

- Layer 4 (Workflow): No escalation or override structure

- Layer 5 (People, Skills, Change): Insufficient workforce understanding of AI limitations

As a result, public trust erodes quickly and employees become hesitant to rely on AI tools. Innovation slows under reactive compliance tightening.

Why These Cases Matter for Enterprise Leaders

Across these four examples, a consistent pattern emerges:

- Governance without adoption creates stagnation

- Adoption without governance creates exposure

- AI without measurable ROI becomes a cost center

Enterprises that succeed treat governance and transformation as a single operating model. They design data controls, model oversight, workflow architecture, workforce enablement, and ROI measurement as interconnected layers — not isolated initiatives.

This is the philosophy behind the AI Adoption Stack Framework™ and the Pacific AI Governance Platform. When deployed together, they don’t merely prevent failure. They create disciplined, defensible, and scalable AI capabilities.

Deep-Dive into the AI Adoption Stack™

Layer 1: Responsible Data Foundation

In practice, most AI failures trace back to data — not because controls are missing, but because ownership, incentives, and behaviors are misaligned. Pacific AI and Blue Marlin address this problem together by combining enforceable compliance with enterprise data maturity.

The Pacific AI Governance Platform’s Policy Suite operationalizes privacy-by-design requirements directly into data usage, enforcing consent, de-identification, and profiling controls at scale. These controls make it possible for organizations to confidently reuse data across AI initiatives without re-litigating risk every time a new use case emerges.

Blue Marlin complements this by working with business and data leaders to establish decision-ready data foundations: clarifying ownership, improving data quality accountability, and embedding risk-aware data practices into day-to-day operations. In real-world enterprise programs, this combination allows teams to move faster because compliant data is already trusted, governed, and culturally understood as a strategic asset.

Layer 2: AI Models and Intelligence

Enterprises often struggle to translate compliant models into meaningful business impact. Models may be technically sound, yet disconnected from real decisions, workflows, or value adds.

Through Pacific AI’s Governor and Guardian tools, lifecycle controls are embedded to ensure models meet safety, transparency, robustness, and fairness expectations throughout development and deployment. This is especially critical for generative and agentic AI systems. Unlike traditional models, these systems can exhibit non-deterministic behavior, goal drift, emergent bias, and unexpected interactions with users, tools, or other agents over time. By continuously monitoring, Pacific AI can detect safety, robustness, and alignment issues as they arise, not months later during an audit.

Blue Marlin builds on this foundation by aligning model choices to business priorities. Rather than asking “Is this model allowed?” Blue Marlin helps leaders answer “Is this the right model for this decision, at this level of risk, with this expected return?” In practice, this often means narrowing model scope, selecting simpler or more interpretable approaches where appropriate, and mapping models directly to value streams.

Layer 3: Governance, Trust, and Controls

Governance frameworks frequently fail because they remain static while organizations are dynamic. Policies exist, but accountability is unclear and adoption erodes over time.

Pacific AI establishes the formal backbone of governance: defined roles such as AI Governance Officer and Risk Manager, structured risk tiers, incident reporting, acceptable-use constraints, and audit-ready documentation. This creates clarity and defensibility at the system level. While other governance solutions exist on the market, most focus on preparing documentation for auditors. Pacific AI performs day-to-day system testing, live monitoring in production, and continuous updates of policies and tests as regulations change.

Blue Marlin activates governance inside the enterprise. By designing federated decision rights, leadership accountability models, and change-ready operating structures, governance becomes something leaders and teams actively use, not work around. This shifts governance from a compliance function to a leadership capability.

Layer 4: Workflow and Orchestration

AI is only as good as the work it streamlines. Yet many organizations deploy compliant AI systems into workflows that were never redesigned to accommodate automation or human oversight.

For AI embedded directly into enterprise workflows — particularly generative assistants and autonomous agents — Pacific AI provides live monitoring, override mechanisms, and behavioral testing in production environments. This ensures AI systems remain aligned with policy and intent even as prompts, data, and user behavior evolve.

Blue Marlin works upstream to redesign those workflows themselves, mapping processes, redefining roles, and establishing human-in-the-loop strategies that balance speed with accountability. In practice, this enables safe automation at scale: AI systems are governed within workflows that were intentionally designed for them.

Layer 5: People, Skills, and Change

AI adoption ultimately succeeds or fails based on people. Training alone is insufficient if leaders are misaligned and incentives remain unchanged. In other words, enablement depends on strong leadership, organizational understanding, and resources that help people adopt AI the right way.

Pacific AI establishes baseline governance literacy through mandated training requirements aligned to regulatory expectations, ensuring that employees understand their responsibilities when interacting with AI systems.

Blue Marlin extends this into true transformation, aligning leadership teams, developing workforce capabilities, and guiding behavior change. In real-world deployments, this ensures that governance knowledge translates into responsible action, not passive awareness.

Layer 6: Value, ROI, and Realization

Many organizations can prove their AI systems are compliant, but far fewer can prove they are worth the investment. Value, economics, and measurement are equally important for effective AI programs.

Pacific AI provides the documentation, evidence trails, and audit artifacts that allow AI systems to operate legally and safely, creating the conditions for value realization.

Blue Marlin defines and measures that value. Through leadership-based ROI models, CRIT™ (Context, Role, Interview, Task) value frameworks, adoption KPIs, and financial alignment, AI initiatives are tied directly to business outcomes. This ensures AI programs are not just approved, but sustained, scaled, and justified by measurable return.
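
As a worked illustration of tying an initiative to measurable return, the sketch below uses the generic formula ROI = (benefit − cost) / cost, with benefit estimated from hours saved. This is an assumption made for illustration, not Blue Marlin's ROI model or the CRIT™ framework, and the function name `simple_roi` and all figures are hypothetical.

```python
def simple_roi(hours_saved: float, hourly_cost: float, program_cost: float) -> float:
    """Generic return on investment for a time-savings use case.

    benefit = hours_saved * hourly_cost
    roi     = (benefit - program_cost) / program_cost
    """
    if program_cost <= 0:
        raise ValueError("program_cost must be positive")
    benefit = hours_saved * hourly_cost
    return (benefit - program_cost) / program_cost


# Hypothetical figures: 2,000 hours saved at a loaded cost of 75 per hour,
# against a total program cost of 100,000.
roi = simple_roi(hours_saved=2_000, hourly_cost=75.0, program_cost=100_000.0)
print(f"ROI: {roi:.0%}")  # prints "ROI: 50%"
```

A result below zero would mean the initiative has not yet paid back its cost, which is the situation the paper describes as AI becoming a cost center.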

Complementary Strengths

Blue Marlin AI Advisors

- Enterprise transformation expertise

- Proprietary adoption frameworks

- Deep experience in data, workflows, organizational design, and large-scale AI programs

- ROI-driven AI adoption

Pacific AI Governance Platform

- Best-in-class regulatory coverage and compliance rigor

- Strong safety, privacy, fairness, and audit methodology

- Clear lifecycle controls and third-party certification capabilities

- Free resources (AI Policy Suite) to democratize responsible AI adoption

Better Together

Pacific AI brings defensibility. Blue Marlin brings transformation, adoption, and value realization.

From Obligation to Advantage

Enterprise AI success is no longer about choosing between innovation and compliance. It is about integrating both into a single operating model. By unifying the Pacific AI Governance Platform with Blue Marlin AI Advisors’ enterprise adoption expertise, organizations can move from compliance as an obligation to governance as a competitive advantage.

For organizations facing regulatory pressure while seeking real AI impact, this collaborative framework provides a practical path forward. Whether starting with governance, adoption, or value realization, the combination of Pacific AI and Blue Marlin enables enterprises to deploy AI with confidence.

These entry points allow leaders to move quickly from obligation to action, without sacrificing rigor or speed. The future of enterprise AI belongs to those who govern it responsibly and transform deliberately. Why not get started today?

References

- ¹Reuters. (2025, December 1). HSBC taps French start-up Mistral to supercharge generative AI rollout. https://www.reuters.com/business/finance/hsbc-taps-french-start-up-mistral-supercharge-generative-ai-rollout-2025-12-01/

- ²Business Insider. (2025, December). Citi has quietly built a 4,000-person internal AI workforce. https://www.businessinsider.com/citi-bank-ai-accelerators-volunteers-2025-12

- ³Business Insider. (2025). Wall Street banks’ AI strategy: JPMorgan, Goldman, Citi, Bank of America. https://www.businessinsider.com/wall-street-banks-ai-strategy-jpmorgan-goldman-citi-bofa-2025

- ⁴Dark Reading. (2025). Copilot and no-code AI agents could leak company data. https://www.darkreading.com/application-security/copilot-no-code-ai-agents-leak-company-data

- ⁵Harvard Business Review. (2026, January). Conventional cybersecurity won’t protect your AI. https://hbr.org/2026/01/ts-research-conventional-cybersecurity-wont-protect-your-ai

- ⁶Reuters. (2026, January 22). Firms must invest in real people to gain from AI, EY’s Teigland says. https://www.reuters.com/business/davos/firms-must-invest-real-people-gain-ai-eys-teigland-says-2026-01-22/

- ⁷TechRadar Pro. (2025). Companies confess their agentic AI goals aren’t working out, and a lack of trust may be why. https://www.techradar.com/pro/companies-confess-their-agentic-ai-goals-arent-really-working-out-and-a-lack-of-trust-could-be-why

- ⁸The Verge. (2025). Microsoft should change its Copilot advertising, says watchdog. https://www.theverge.com/news/688056/microsoft-copilot-advertising-watchdog-response

- ⁹Windows Central. (2026). Copilot “Reprompt” exploit allowed data theft with a single click. https://www.windowscentral.com/artificial-intelligence/microsoft-copilot/copilot-ai-reprompt-exploit-detailed-2026